
Conversation

1 - Conversation overview

Alpha

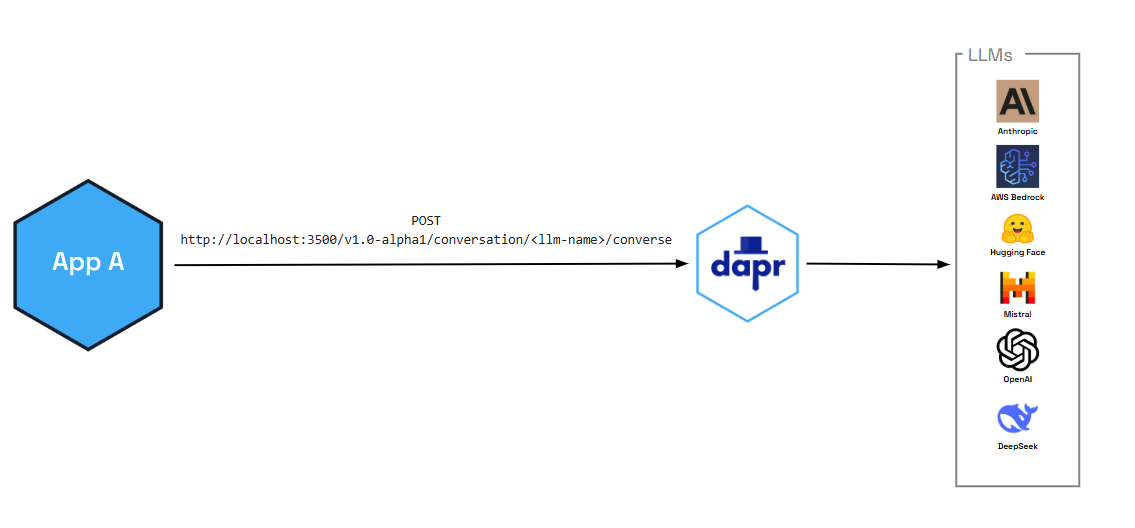

The conversation API is currently in alpha.

Dapr’s conversation API reduces the complexity of securely and reliably interacting with Large Language Models (LLMs) at scale. Whether you’re a developer who doesn’t have the necessary native SDKs or a polyglot shop that just wants to focus on the prompt aspects of LLM interactions, the conversation API provides one consistent API entry point for talking to underlying LLM providers.

In addition to providing critical performance and security features (such as prompt caching and PII scrubbing), you can pair the conversation API with other Dapr capabilities, such as:

- Resiliency circuit breakers and retries to work around rate limit and token errors

- Middleware to authenticate requests coming to and from the LLM

Dapr provides observability by issuing metrics for your LLM interactions.

Features

The following features are available out of the box for all supported conversation components.

Prompt caching

Prompt caching improves performance by storing and reusing prompts that are repeated across multiple API calls. Instead of reprocessing the same information for every new request, Dapr keeps frequently used prompts in a local cache that can be reused within your cluster, pod, or other environment, which reduces both latency and cost.

Personally identifiable information (PII) obfuscation

The PII obfuscation feature identifies and removes sensitive user information from conversation inputs and responses. Enable PII obfuscation on input and output data to protect your users’ privacy and scrub details that could be used to identify an individual.

The PII scrubber obfuscates the following user information:

- Phone number

- Email address

- IP address

- Street address

- Credit card number

- Social Security number

- ISBN

- Media Access Control (MAC) address

- Secure Hash Algorithm 1 (SHA-1) hex

- SHA-256 hex

- MD5 hex
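As a rough illustration of pattern-based obfuscation, the following Go sketch replaces email addresses and IPv4 addresses with placeholder tokens. It covers only two of the categories listed above, and both the regular expressions and the placeholder format are assumptions for illustration; Dapr’s scrubber may use different patterns and replacement text.

```go
package main

import (
	"fmt"
	"regexp"
)

// Hypothetical patterns; deliberately simple and not exhaustive.
var (
	emailPattern = regexp.MustCompile(`[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}`)
	// Loose IPv4 match: four dot-separated groups of 1-3 digits (octet values are not range-checked).
	ipv4Pattern = regexp.MustCompile(`\b(?:\d{1,3}\.){3}\d{1,3}\b`)
)

// scrubPII replaces every email and IPv4 address in text with a placeholder token.
func scrubPII(text string) string {
	text = emailPattern.ReplaceAllString(text, "<EMAIL_ADDRESS>")
	return ipv4Pattern.ReplaceAllString(text, "<IP>")
}

func main() {
	fmt.Println(scrubPII("Contact alice@example.com from 192.168.1.20 for access."))
}
```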

Demo

Watch the demo presented during Diagrid’s Dapr v1.15 celebration to see how the conversation API works using the .NET SDK.

Try out conversation

Quickstarts and tutorials

Want to put the Dapr conversation API to the test? Walk through the following quickstart and tutorials to see it in action:

| Quickstart/tutorial | Description |
|---|---|
| Conversation quickstart | Learn how to interact with Large Language Models (LLMs) using the conversation API. |

Start using the conversation API directly in your app

Want to skip the quickstarts? Not a problem. You can try out the conversation building block directly in your application. After Dapr is installed, you can begin using the conversation API starting with the how-to guide.

Next steps

2 - How-To: Converse with an LLM using the conversation API

Alpha

The conversation API is currently in alpha.

Let’s get started using the conversation API. In this guide, you’ll learn how to:

- Set up one of the available Dapr components (echo) that work with the conversation API.

- Add the conversation client to your application.

- Run the connection using dapr run.

Set up the conversation component

Create a new component file called conversation.yaml and save it to a components or config subfolder in your application directory.

Select your preferred conversation component spec for your conversation.yaml file.

For this scenario, we use a simple echo component, which returns your input unchanged as the output.

```yaml
apiVersion: dapr.io/v1alpha1
kind: Component
metadata:
  name: echo
spec:
  type: conversation.echo
  version: v1
```

Use the OpenAI component

To interface with a real LLM, use one of the other supported conversation components, including OpenAI, Hugging Face, Anthropic, DeepSeek, and more.

For example, to swap out the echo mock component for an OpenAI component, replace the contents of conversation.yaml with the following and copy your API key into the component file. Because this component is named openai, use that name instead of echo when calling the conversation API.

```yaml
apiVersion: dapr.io/v1alpha1
kind: Component
metadata:
  name: openai
spec:
  type: conversation.openai
  metadata:
  - name: key
    value: <REPLACE_WITH_YOUR_KEY>
  - name: model
    value: gpt-4-turbo
```

Connect the conversation client

The following examples use the Dapr SDK clients for .NET, Go, and Rust to send a conversation request to the Dapr sidecar. You can also send a POST request directly to the sidecar’s HTTP endpoint instead.

```csharp
using Dapr.AI.Conversation;
using Dapr.AI.Conversation.Extensions;

var builder = WebApplication.CreateBuilder(args);
builder.Services.AddDaprConversationClient();
var app = builder.Build();

var conversationClient = app.Services.GetRequiredService<DaprConversationClient>();

// "echo" must match metadata.name in conversation.yaml
var response = await conversationClient.ConverseAsync("echo",
    new List<DaprConversationInput>
    {
        new DaprConversationInput(
            "Please write a witty haiku about the Dapr distributed programming framework at dapr.io",
            DaprConversationRole.Generic)
    });

Console.WriteLine("Received the following from the LLM:");
foreach (var resp in response.Outputs)
{
    Console.WriteLine($"\t{resp.Result}");
}
```

```go
package main

import (
	"context"
	"fmt"
	"log"

	dapr "github.com/dapr/go-sdk/client"
)

func main() {
	client, err := dapr.NewClient()
	if err != nil {
		panic(err)
	}
	defer client.Close()

	input := dapr.ConversationInput{
		Content: "Please write a witty haiku about the Dapr distributed programming framework at dapr.io",
		// Role: "", // Optional
		// ScrubPII: false, // Optional
	}

	fmt.Printf("conversation input: %s\n", input.Content)

	// Must match metadata.name in conversation.yaml
	conversationComponent := "echo"
	request := dapr.NewConversationRequest(conversationComponent, []dapr.ConversationInput{input})

	resp, err := client.ConverseAlpha1(context.Background(), request)
	if err != nil {
		log.Fatalf("err: %v", err)
	}

	fmt.Printf("conversation output: %s\n", resp.Outputs[0].Result)
}
```

```rust
use dapr::client::{ConversationInputBuilder, ConversationRequestBuilder};
use std::thread;
use std::time::Duration;

type DaprClient = dapr::Client<dapr::client::TonicClient>;

#[tokio::main]
async fn main() -> Result<(), Box<dyn std::error::Error>> {
    // Sleep to allow for the server to become available
    thread::sleep(Duration::from_secs(5));

    // Set the Dapr address (the SDK reads the port from DAPR_GRPC_PORT, which dapr run sets)
    let address = "https://127.0.0.1".to_string();
    let mut client = DaprClient::connect(address).await?;

    let input = ConversationInputBuilder::new("Please write a witty haiku about the Dapr distributed programming framework at dapr.io").build();

    let conversation_component = "echo";
    let request =
        ConversationRequestBuilder::new(conversation_component, vec![input.clone()]).build();

    println!("conversation input: {:?}", input.content);

    let response = client.converse_alpha1(request).await?;

    println!("conversation output: {:?}", response.outputs[0].result);
    Ok(())
}
```

Run the conversation connection

Start the connection using the dapr run command. In this scenario, we run the application with the app ID conversation and point it to the conversation YAML file in the ./config directory.

For .NET:

```bash
dapr run --app-id conversation --dapr-grpc-port 50001 --log-level debug --resources-path ./config -- dotnet run
```

For Go:

```bash
dapr run --app-id conversation --dapr-grpc-port 50001 --log-level debug --resources-path ./config -- go run ./main.go
```

Expected output

- '== APP == conversation output: Please write a witty haiku about the Dapr distributed programming framework at dapr.io'

For Rust:

```bash
dapr run --app-id=conversation --resources-path ./config --dapr-grpc-port 3500 -- cargo run --example conversation
```

Expected output

- 'conversation input: "Please write a witty haiku about the Dapr distributed programming framework at dapr.io"'

- 'conversation output: "Please write a witty haiku about the Dapr distributed programming framework at dapr.io"'
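When dapr run launches your app, it sets the DAPR_HTTP_PORT and DAPR_GRPC_PORT environment variables for the app process, and the SDK clients typically use these to locate the sidecar. If you call the HTTP endpoint directly, a small helper like the following Go sketch can resolve the sidecar address. The sidecarHTTPBase name and the fallback to port 3500 (Dapr’s default HTTP port) are assumptions for illustration.

```go
package main

import (
	"fmt"
	"os"
)

// sidecarHTTPBase returns the base URL of the local Dapr sidecar's HTTP API.
// It reads DAPR_HTTP_PORT (set by dapr run) and falls back to 3500 when unset.
// getenv is injected so the lookup can be tested without touching the real environment.
func sidecarHTTPBase(getenv func(string) string) string {
	port := getenv("DAPR_HTTP_PORT")
	if port == "" {
		port = "3500"
	}
	return "http://localhost:" + port
}

func main() {
	fmt.Println("sidecar HTTP API:", sidecarHTTPBase(os.Getenv))
}
```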

Advanced features

The conversation API supports the following features:

- Prompt caching: Lets you cache prompts in Dapr, leading to much faster response times and lower costs for egress and for inserting the prompt into the LLM provider’s cache.

- PII scrubbing: Obfuscates sensitive data going into and coming out of the LLM.

To learn how to enable these features, see the conversation API reference guide.

Related links

Try out the conversation API using the full examples provided in the supported SDK repos.